Multi-turn question answering and chatbot problems have recently been among the hottest topics in NLP. This post summarizes some traditional papers and several recent ones.

Multi-view Response Selection for Human-Computer Conversation

Tags: multi views, general attention, disagreement loss, likelihood loss

The structure of the model is clear. In my view, the essence of the model is multi-view and multi-loss. By applying information extractors at two levels (context level and sentence level), the model gathers two independent views of the whole context. The two loss functions can likewise be seen as two views on selecting answers. The only drawback of the model, in my view, is that the matching operation comes too late, only at the very end of the model.

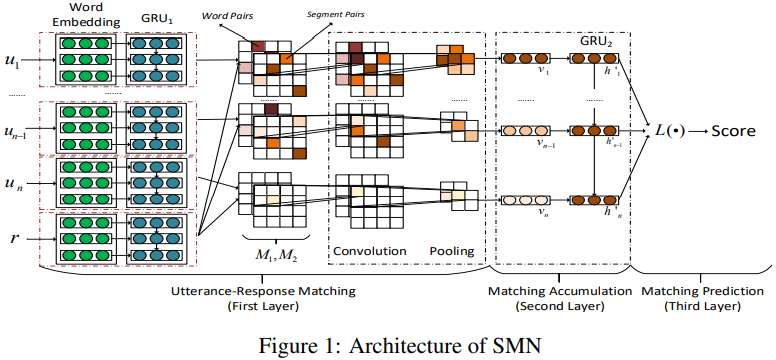
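
To make the multi-view, multi-loss idea concrete, here is a minimal NumPy sketch. It assumes each view has already encoded the context and the candidate response into fixed-size vectors, and it scores each view with a bilinear match followed by a sigmoid. The names `view_score` and `multi_view_loss` are my own, and the squared-difference disagreement term is a stand-in chosen for illustration, not necessarily the exact formula used in the paper.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def view_score(context_vec, response_vec, W, b):
    """Matching probability for one view: sigmoid(c^T W r + b)."""
    return sigmoid(context_vec @ W @ response_vec + b)

def multi_view_loss(p_context, p_sentence, label, eps=1e-9):
    """Combine a likelihood term and a disagreement term.

    p_context, p_sentence: matching probabilities from the two views.
    label: 1 if the response is the correct one, else 0.
    """
    def nll(p):
        # Binary negative log-likelihood for a single view.
        return -(label * np.log(p + eps) + (1 - label) * np.log(1 - p + eps))

    likelihood = nll(p_context) + nll(p_sentence)
    # Assumption: penalize the views for disagreeing via a squared difference.
    disagreement = (p_context - p_sentence) ** 2
    return likelihood + disagreement
```

Note how the context and the response only meet inside `view_score`, after each side has been fully encoded. That is the "matching comes too late" drawback mentioned above.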

Sequential Matching Network: A New Architecture for Multi-turn Response Selection in Retrieval-Based Chatbots (SMN)

Tags: multi level info, dot self attention, CNN filter

The model’s structure is basically clear. Note that the word-pair matrix is computed from word embeddings, whereas the segment-pair matrix is computed from GRU hidden states. In the last layer, the authors tried three methods for the L(.) function: the attention combination works best, the linear combination works worst, and the last-hidden-state method gives fair results but is fast. The authors also use multi-view information to extract information from sentences, as the previous paper did.
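
The two matching matrices and the three choices for L(.) can be sketched in NumPy as follows. This is only an illustration under assumptions: the function names are mine, the bilinear matrix `A` and the attention parameters `W`, `b`, `t_s` are hypothetical learned weights, and the attention variant here scores only the matching states `H`, which simplifies what the paper does. `H` stands for the hidden states of the final GRU, one row per turn.

```python
import numpy as np

def word_pair_matrix(utt_emb, resp_emb):
    """Dot products between word embeddings: (n_u, d) x (n_r, d) -> (n_u, n_r)."""
    return utt_emb @ resp_emb.T

def segment_pair_matrix(utt_hidden, resp_hidden, A):
    """Bilinear similarity between GRU hidden states, with assumed weight A."""
    return utt_hidden @ A @ resp_hidden.T

def softmax(x):
    e = np.exp(x - x.max())  # subtract the max for numerical stability
    return e / e.sum()

def combine_last(H):
    """L(.) option 1: keep only the last hidden state."""
    return H[-1]

def combine_linear(H, w):
    """L(.) option 2: fixed learned weights w, one per turn."""
    return w @ H

def combine_attention(H, W, b, t_s):
    """L(.) option 3: attention weights computed from the states themselves."""
    scores = np.tanh(H @ W + b) @ t_s
    alpha = softmax(scores)
    return alpha @ H
```

In the model, both matrices are then passed through a CNN to produce one matching vector per turn, and a GRU over those vectors yields `H`. The sketch leaves out those two steps.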